AlphaBrain Documentation

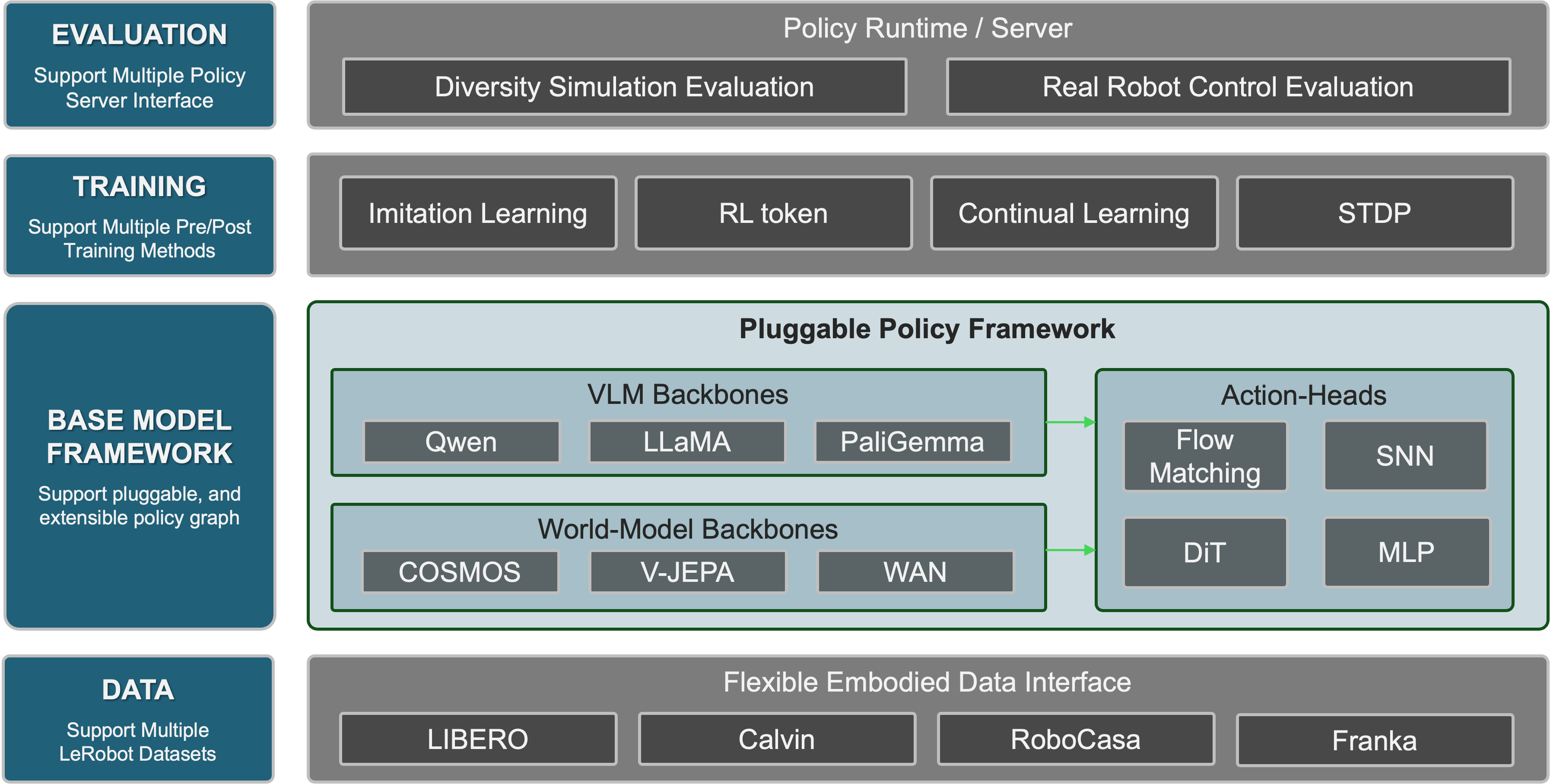

AlphaBrain is the world’s first all-in-one, open-source community for embodied intelligence, built to be ready out of the box. It unifies multiple VLA architectures, world model backbones, biologically inspired learning algorithms, and reinforcement learning paradigms under a single, extensible framework, bringing embodied AI within everyone’s reach.

Where to go next

- Set up conda envs, Flash Attention, pretrained weights, datasets, and `.env`: everything you need before the first run.

- Start with Baseline VLA for the default finetune + eval, then pick a capability: NeuroVLA, RL-Token, World Model, or Continual Learning.

- API reference for every public class and function in `AlphaBrain/`.

Key capabilities

| Capability | Summary | Quickstart |
|---|---|---|
| Baseline VLA | PaliGemmaOFT / PaliGemmaPi05 / LlamaOFT finetune + LIBERO eval. | Baseline VLA |
| NeuroVLA | Brain-inspired VLA with Spiking Neural Network action head and R-STDP learning. | NeuroVLA |
| RL-Token | Off-policy TD3 online RL fine-tuning with an information-bottleneck encoder. | RL-Token |
| World Model | V-JEPA / Cosmos / Wan backbones with pluggable GR00T / OFT / PI decoders. | World Model |
| Continual Learning | Experience-replay fine-tuning across 10 LIBERO tasks (LoRA or full-param). | Continual Learning |
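The RL-Token row refers to off-policy TD3. For readers new to TD3, the sketch below shows how a generic TD3 critic target is built from target policy smoothing (clipped Gaussian noise on the target action) and clipped double-Q learning (the minimum of two target critics). It is a minimal, framework-free illustration, not AlphaBrain's RL-Token code: the function `td3_target`, its parameters, and the toy actor/critic callables are hypothetical, and the defaults (`policy_noise=0.2`, `noise_clip=0.5`) are assumed values commonly used with TD3.

```python
import random
from typing import Callable, List, Optional, Sequence

# Hypothetical type aliases for this sketch; not part of the AlphaBrain API.
Action = List[float]
Actor = Callable[[Sequence[float]], Action]
Critic = Callable[[Sequence[float], Action], float]


def td3_target(
    reward: float,
    done: bool,
    next_obs: Sequence[float],
    target_actor: Actor,
    target_q1: Critic,
    target_q2: Critic,
    gamma: float = 0.99,
    policy_noise: float = 0.2,
    noise_clip: float = 0.5,
    max_action: float = 1.0,
    rng: Optional[random.Random] = None,
) -> float:
    """Generic TD3 critic target y for a single transition (hypothetical helper)."""
    rng = rng if rng is not None else random.Random()

    # Target policy smoothing: add clipped Gaussian noise to each target action
    # component, then clip the result to the valid action range.
    smoothed: Action = []
    for a in target_actor(next_obs):
        eps = max(-noise_clip, min(noise_clip, rng.gauss(0.0, policy_noise)))
        smoothed.append(max(-max_action, min(max_action, a + eps)))

    # Clipped double-Q: use the smaller of the two target critic estimates
    # to reduce overestimation bias.
    q_next = min(target_q1(next_obs, smoothed), target_q2(next_obs, smoothed))

    # Bootstrap from the next state only when the episode did not terminate.
    return reward + gamma * (1.0 - float(done)) * q_next
```

For example, with a toy actor that always returns `[0.5, -0.5]`, critics `q1(obs, a) = sum(a) + 1` and `q2(obs, a) = sum(a) + 2`, and `policy_noise=0.0`, a non-terminal transition with reward 1.0 gets a target of about `1.0 + 0.99 * min(1.0, 2.0) = 1.99`. TD3 also delays actor and target-network updates relative to critic updates; that schedule lives in the training loop and is not shown here.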

Project links

- Source: github.com/AlphaBrainGroup/AlphaBrain

- Issues: Report bugs or request features

- License: MIT